Media’s civil war over AI

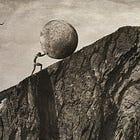

It's time to draw the line.

Embedded is your essential guide to what’s good on the internet, written by Kate Lindsay and edited by Nick Catucci.

Did not realize today’s post would end up being a counterpoint of sorts, but that’s simply one of the delightful coincidences of being human!!! —Kate

New on ICYMI: Tell the Bees joined us to talk Chappell Roan, but really, what’s behind the hate campaigns that tend to plague famous women.

Head over here to subscribe to ICYMI wherever you listen to podcasts 🫶

AI has always been a worker issue. Almost as soon as LLMs began rolling out to the public, data analysts and consulting firms and even the AI companies themselves started regularly publishing reports on what jobs AI might replace and when. Now, companies like Oracle are conducting layoffs so vast that they’re being carried out via automated emails in order to reach all of the estimated tens of thousands of employees being axed as the company increases its spending on AI.

The risk of any given job being lost to AI depends on its exposure to it. How many of the role’s most important activities could be replaced with or are already assisted by AI? Most of these mass, headline-making layoffs have affected tech jobs. In 2023, around 32% of workers in “information and technology” believed AI would help them more than hurt them; 11% felt the opposite. It seems safe to assume, then, that a good number of these employees began incorporating (or were forced to incorporate) AI tools into their workflow, and perhaps unintentionally demonstrated that they, the humans, were the most expensive and least effective part of the equation.

I worry the tech industry’s largely unopposed embrace of AI tools is playing a role in expediting its human workers’ obsolescence. I also worry the same thing is about to happen in journalism.

After tech, media jobs like advertising, content creation, technical writing, and journalism tend to be next on those lists of roles most threatened by AI. For a while, journalists seemed largely united in their opposition to using generative AI. But last month, the dam started to break.[1] The Wall Street Journal profiled Fortune editor Nick Lichtenberg, who is “all in” on AI, using it to help write seven stories a day (my thoughts on that can be found here). New York Times book reviewer Alex Preston was let go after using an AI tool, which ended up pulling verbatim from a Guardian review of the same novel he was writing about. The book Shy Girl by Mia Ballard was pulled from publication after widespread claims that Ballard used AI to write the book without disclosing it.

The threat of AI to media is obvious, but the industry’s stance on it seems to be rapidly diverging. Use it at Fortune and you’ll be profiled in the WSJ; try it at the New York Times and you’ll be fired. In one case you proudly disclose it. In the other, you attempt to get away with it. We haven’t as an industry decided where we draw the line, because, as it’s becoming clear, we aren’t even all talking about the same thing.

AI—artificial intelligence—is a broad category. It simply means any computational system that can simulate human intelligence, quickly parsing large swaths of information or recognizing patterns. It was not invented in 2022 with the release of ChatGPT. That, along with Claude and Grok and Sora (RIP) and the like, is generative AI. Generative AI is a subcategory of AI that is trained on pre-existing data to generate entirely new text, images, video, and software code. But since generative AI was the first time we, as regular people, had an everyday reason to use the term “AI” at all, the two seem to have been conflated.

Personally, I see AI as a tool for rabid capitalism and a threat to workers and therefore I feel my opposition to it falls squarely within my leftist values. But unless we’re clear on what that specific threat is, we’re not going to be able to advocate against it without coming off ill-informed.

In reality, journalists have been using AI for at least the past ten years, mainly through transcription services. We just never called them by their name. But now some people’s knee-jerk distaste for AI means journalists and websites using tools that have been staples of the industry for over a decade are being retroactively branded as traitors—for instance, some people were disgruntled by the fact that the transcript for our viral ICYMI ep was automatically generated by AI, something that is and has been true for almost every podcast you’ve listened to for years. It’s creating division during a time when we most need to be organized.

We need to decide where we draw the line with AI use, so here’s mine: I use automated transcription services for every interview, and while I won’t use an LLM for research or to automate any of my administrative tasks or do whatever “vibe coding” is, I do not care if anyone else does. While this may go against someone’s personal values, I do not see it as contradicting the values of journalism.

Generative AI, however, goes against the very tenets of journalism. Generative AI is trained on the work of other people. I think using generative AI for your writing is plagiarism.

Generative AI also erodes worker solidarity. LLM-based tools like AI agents are the new hires that companies pay less money to do more work. I think the journalists using generative AI to eventually replace themselves and the rest of us are scabs!

There’s likely a contingent of people who take a “them’s the breaks” attitude to this sort of thing. And it’s true: transcription services and built-in spell-checkers have already made certain roles in journalism precarious or obsolete. But a wholehearted embrace of generative AI for our writing risks eliminating all of the roles, and I invite anyone who thinks it’s unrealistic to codify job security to go and try to fire a tenured teacher in the New York City public school system.

Journalism is a public utility, one our current moment needs desperately as the media outlets that don’t end up shuttering are instead consolidated under the ownership of one of three companies that have political agendas and now control the flow of information. Those are agendas that the AI companies behind these generative AI tools gunning to replace us appear to agree with. The new AI overlords may be coming, but I, for one, will not welcome them.

[1] Yes, I just saw Hoppers.

"Journalism is a public utility," as admirable as it sounds, doesn't seem to be a perspective shared by most media companies, except for maybe the nonprofit ones. (And we don't really want journalism to be a public utility under an authoritarian administration...)

At ONA last week, some of the largest global newsrooms spoke about encouraging AI adoption across all teams, mostly to support admin and analytics tasks rather than writing and reporting. And pretty much every media company is cool with using AI-enablement to run ad tech / juice audiences if the revenue numbers go up. The disconnect between the business side and the edit side is wild here, unless you're at Fortune.

Generally it doesn't seem that the problems that internet writers, workers, and consumers care about in re: AI use are the same problems that media companies care about... which might be why it's time to build some new media companies.